Machine translation

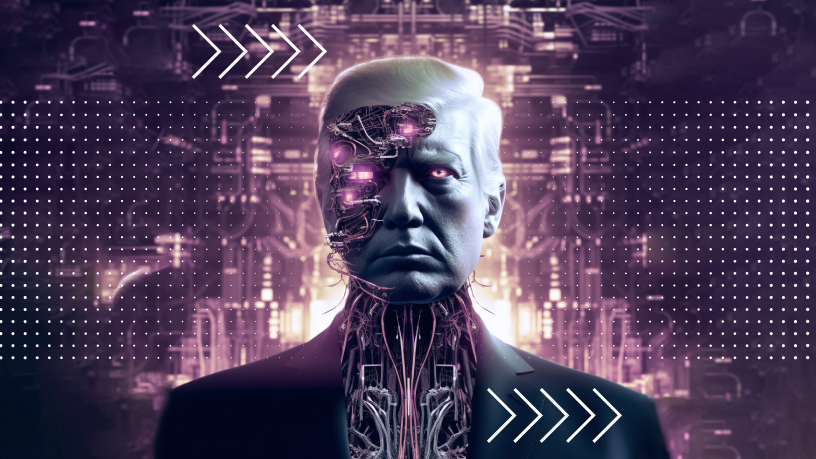

The leaders of artificial intelligence development compare AI to pandemics and nuclear war. Are they sincerely mistaken, or do they stand to gain?

On May 30, the Center for AI Safety published an open letter. It consists of one sentence: “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.” The letter has already been signed by almost all the leaders of AI, with some important exceptions: for example, Yann LeCun, one of the fathers of modern AI, has not signed it. Is AI really that dangerous, and can it really be compared to such threats as pandemics and nuclear war?

A brave new world

It all started on March 14, when OpenAI released ChatGPT 4. As the version number suggests, there had already been versions 2, 3, and 3.5 (the last of these was released in November 2022), but none of them caused such panic or such hype. And almost at once, before people had time to properly enjoy ChatGPT 4 (only a week had passed), on March 22 Elon Musk and colleagues published an open letter on the website of the Future of Life Institute, in which respectable people called for a halt to the development of large language models (LLMs) such as GPT-4.

From there, things escalated. On May 11, the European Union moved forward with the AI Act, a draft law regulating and restricting the use of AI. The G7 summit also discussed AI. AI leaders have become as popular as rock stars. Sam Altman, the CEO of OpenAI, has become a recognizable face. He, along with other AI developers and representatives of major IT companies, was invited to the White House for a meeting with the vice president. Then Altman testified before the Senate and said he was setting off on a tour of cities and countries to explain what AI is and how dangerous it is.

For the last month, Altman has been giving non-stop interviews and saying everywhere that AI should be regulated, preferably at the international level, and that something like the IAEA should be set up, only for AI rather than nuclear energy. (Altman was among the first to sign the letter I started with.) On May 22, Altman and two other leading OpenAI employees and co-founders published a column on the company’s website in which they write that within 10 years AI will surpass humans in most areas of knowledge. It will be not just AI and not even AGI (the usual name for AI comparable to human intelligence), but superintelligence.

On June 6, Sam Altman reached Abu Dhabi, where he attended another conference on AI control. The conference was opened by Andrew Jackson. He is associated with Group 42, a subsidiary of DarkMatter. DarkMatter is, in full accordance with its name, a rather “dark” company, both in its goals and in its methods. In fact, all of its orders come from the government of the UAE, which is not the most democratic. The CIA is very interested in the work of DarkMatter. DarkMatter is suspected of electronic surveillance and hacking. For good money, the company hires former CIA and NSA (National Security Agency) employees and, remarkably for an Arab country, even Israelis. AP cites Andrew Jackson as saying, “We are a political force, and we will play a central role in regulating AI.” Who is “we”? DarkMatter? The UAE government? Well, why not? If an international regulatory body, something like the IAEA, is being created, then everyone will have to cooperate and share information, including with DarkMatter. That’s the way it is. Except that Altman seems to have been looking for other allies.

Several questions arise.

Why are AI developers so eager to fall into the arms of the state?

The longer the hype lasts, the better for AI developers. No one, and shareholders least of all, asks the companies and technologies at the peak of the hype what they are doing and when the payoff will come. And as of today, the payoff is not obvious. By various estimates, a ChatGPT request costs something like 30-40 cents. That’s 500 to 1,000 times the cost of a standard Google query. It costs Daddy Dorset, that is, Microsoft (the main investor in OpenAI), something like million a day. The more requests, the greater the direct loss. The more requests, the more data center capacity is needed, and that means very expensive NVIDIA chips. But Microsoft wouldn’t be itself if it didn’t count the money. There are different ways to monetize the hype, from developing your own chips to actively earning money from cloud services.

Sam Altman says that OpenAI is the most capital-intensive startup in history. It needs 100 billion (that’s right, billions, not millions) to get started. Where can you get it? The government is a very good donor. Let it invest heavily in AI, and not just any kind, but the “safe” kind. We all love safety and are willing to pay for it. So far, the U.S. government is stepping into the industry with a modest $140 million. But that’s for now.

Sam Altman says that companies that don’t use AI will lose. So companies, afraid of being too late, start using AI without really understanding what they are doing or why they need it. As a result, prices for cloud services, Microsoft Azure for example, are going up. Once the hype subsides, cloud prices will drop. ChatGPT requests will become routine, but they won’t become 1,000 times cheaper. Azure services will get cheaper. That means a crisis. Probably not as steep as the dot-com crash of 2000, but at least as steep as the metaverse crash. Do we still remember what that was?

The metaverses are the illegitimate child of the pandemic. Lockdowns left people unable not only to travel, but even to leave the house. But people need people, and Zoom is not enough. Then the pandemic ended. People left their rooms. The metaverses were over. At least for now.

For a technology of the caliber its creators describe to take off and not come crashing back down, it must meet some basic human need. Cell phones brought people closer. Social networks brought them closer still. As a result, mobile phones spread across Africa in a remarkably short time. Electricity made it possible to transmit energy to where it was needed. James Clerk Maxwell paid for all of basic science for a thousand years to come. He changed our lives.

What basic human need does artificial intelligence meet? The developers answer: AI will change everything. It will abolish poverty, solve the problems of climate, energy, and health care, and everyone will be happy (for example, this interview describes it all very vividly). This is, to put it mildly, a bit vague. It strongly resembles either the philosopher’s stone or the elixir of immortality. It is hard to believe for the simplest reason: mankind has never encountered anything like this in its entire history. In general, so far, the promised goodies do not look very convincing.

I know one basic human need that AI is already helping to meet, but it is unlikely to be of much interest to the masses: the need for cognition. AI, and above all multilayer neural networks, has provided another way of processing data that is much closer to human thinking than traditional programming. But this is not a need for communication or a need for warmth. Humans need to live first, and only then may some of them feel the urge to think. At leisure. Aristotle, in fact, says that science is the child of leisure. And rightly so. Science requires freedom of mind.

If it’s not clear what AI will give, then let’s tell everyone what it will take away (if, of course, it is used incorrectly and the 100 billion dollars is not given to OpenAI, which knows exactly how to get it right). Then it’s simple: if you don’t come to terms on AI, you get a pandemic mixed with nuclear war. And that’s where the regulators have to be called in, of course. Not only because they will give money, but also because they will share responsibility if something goes wrong. And the developers will lament: how could they have failed to keep watch? We did warn them. After all, their hands really are trembling a little.

What dangers of AI can be seen today?

Millions unemployed? The only mass AI application today is ChatGPT. The only industry where ChatGPT is used in earnest is programming. Programmers have been working with it since ChatGPT 3. That’s the year 2022. By today’s standards, that’s a long time ago. (I’m not referring to those who make the tool, who have been working on AI for about 70 years now, but to those who use it.) I haven’t heard of any mass layoffs in IT. On the contrary, there are plenty of vacancies. Even the most modest beginner with a basic knowledge of Python gets snapped up. So no cuts are expected, although the automation of workplaces that Goldman Sachs experts write about is obvious, and programmers’ efficiency is growing before our eyes. But here’s the reaction of bioinformatician Xijin Ge, who has been taking a close look at ChatGPT’s capabilities specifically in writing code. Ge’s words are rather unflattering: you should treat this AI as an intern, hard-working and eager to please everyone, but inexperienced and often making mistakes.

What has AI given to programming? Hope. Hope that a dream that is 70 years old will come true. Deep learning algorithms can work well, or they can work poorly. But even when they work poorly, they still work. A classical program either works or it doesn’t. It’s too fragile. And that is the kind of technology we built modern civilization on. As Gerald Weinberg said, if builders built houses the way programmers write programs, the first woodpecker to come along would destroy civilization. Weinberg only failed to specify that it wasn’t the programmers’ fault. They did the best they could. It’s just not enough. You need something more reliable. This is exactly what AI promises. Why should humanity disappear as a result?

Deepfakes? This is really serious. Not even because everything will drown in fakes, as Geoffrey Hinton, one of the founding fathers of modern AI, says, but because already today we are all collectively afraid of it. Fear is a very poor adviser.

Tell me, honestly, do you really believe everything they write on the… I mean, the Internet? I don’t. Not what’s written, not what’s drawn, not what’s on video. We don’t believe what we hear, we believe who’s talking. For example, our neighbor Uncle Kolya (or our friends feed). As Mrs. Hudson used to say, “That’s what the Times says.” Or Reuters, or AFP, or Nature, or Science, or… Are they never wrong? They are. Do they never lie? They may. But the cost of being wrong is high. If all these brands risk their reputations, then Uncle Kolya risks a punch in the face, which is also, on the whole, a tangible price for a fake. In other words, what they report is information that has been thoroughly vetted. It can be taken seriously. But if something was written by someone on Facebook with an empty profile, then even if he tells the absolute truth, we will not believe him and will not check his information, because life is short and there is no strength to refute every chatterbox.

Recently someone posted on Twitter a “photo” of an explosion at the Pentagon. The picture appears to have been generated by an AI. A hundred thousand fools reposted it, although a quick glance at this “photo” is enough to see that it is a fake, and a crude one, too. But a hundred thousand fools are a force. The Pentagon spokesman came out and said nothing of the sort had happened, and the Arlington fire inspector came out and said the same. Everybody somehow calmed down and forgot about it. The interesting thing is that the stock index fell slightly amid all this noise. Not by much, 0.29%, but it was down. Not for long. Then it rebounded. What happened? The traders’ machines follow the news. They caught that noise. If they had taken the news seriously, the indices would have plummeted. But the machines quickly figured it out and brought the indices back on track. They turned out to be ready for such a crude fake.

Before we step onto the shaky ground of “what happens if…”, where serious people, the developers of AI, are literally dragging us by the hair, a few words about reality. One bomb falling on a peaceful city, one bullet stopping a living heart, has caused incomparably more damage than all the AI we have today.

And I wouldn’t throw around comparisons of AI to nuclear war and pandemics. When Andrew Jackson, associated with DarkMatter, spoke at the Abu Dhabi conference, it somehow became clear why no IAEA analogue for AI control would work. The IAEA works with large and heavy (in the truest sense) facilities: uranium mines, plants producing weapons-grade plutonium, rivers for cooling nuclear power plants… It’s all pretty easy to see right from space. But how do you see an AI system? Especially at the first stage, when it does not yet need much power to operate. And it turns out that the “dark” companies are already prepared to take part in the “control”, but who will control them, and how? So far, the CIA has not been very convincing at it. The WHO estimates that at least 20 million people have died from the pandemic. Not to mention those who were infected and recovered, or those with long COVID. That’s so much pain and grief that AI comes nowhere near it. And what the developers themselves say about AI are predictions, more or less probable.

So what would happen if the “Pentagon explosion” were not a crude fake, but a subtle and deep system of disinformation, calculated so that the trading machines would not recognize it and the indices would fall in earnest? Such a fake would be very expensive. It couldn’t be done in five minutes. It would have to fool at least Reuters and AFP. And I do mean “and.” The information must come from several independent sources, which have their own sources (let’s assume that they do not rewrite each other’s news, or at least that they shouldn’t). So each of them would have to be convinced. And they know how to check things the old-fashioned way: pick up the phone and call the Arlington fire department, and (just to be safe) over an analog line. (Oddly enough, wired analog phones still exist, and analog encryption systems are taken seriously by serious people. Quantum cryptography, for example, is analog.)

If information is easy to produce (knocked together on the fly), then it is cheap and easy to replicate. But it is unconvincing, no matter how many naive users repost it. If AI floods everything with such cheap deepfakes, they will simply stop being perceived as meaningful information and will look like trivial email spam. Does spam bother you much today? It doesn’t bother me. Twenty years ago it was a serious problem. To convince anyone of anything at all, these deepfakes will require serious investment, and there’s no guarantee that they will work. And then everything will be the same as always: a high risk of losing the investment. Someone will take the risk. But the price will only go up. As it is today. As it always has been.

But the problem of deepfakes is, of course, very complicated. It has a lot of subtle points. Already today we can see that, as with spam or computer viruses, both the sword (the AI fake generator) and the shield (the AI detector) will develop. And it is this problem that is the main one today. The risk here is quite concrete. There are concrete solutions too, but that’s a separate conversation.

Why are the masses so sluggish in responding to all this horror?

And why, in fact, are the people silent? We don’t see any demonstrations against AI. Well, perhaps the Hollywood screenwriters have spoken up: they are very protective of their intellectual property and are afraid that AI will take away their livelihood (they call it the “plagiarism” problem). Maybe they’re afraid for a reason.

Why did people smash 5G towers, and why did the videos about Bill Gates microchipping everybody get millions of views? There was, in fact, a whole movement of anti-vaxxers: not a hundred people, not a thousand, but millions around the world. Where are the concerned citizens against AI? Isn’t AI, according to OpenAI, far more dangerous than vaccines? Why is it the developers themselves, or government officials and congressmen, or Hollywood screenwriters who are calling for control of AI today?

Everything was clear about microchipping and vaccines. Not because anyone understood anything about how it worked or how 5G was different from 4G, but because it was really scary. The whole brew was bubbling on the black fire of the pandemic. The virus is an invisible killer. How do you fight it? If you tear down a tower, you’ll feel better. At least you did something useful. And whether it was 5G or 4G, who’s counting?

As for AI, everything is unclear. Those who warn of its danger today have not offered a single convincing scenario of how exactly it will destroy humanity. Skynet, perhaps, or Legion? It’s okay, folks, it’s going to be OK. Schwarzenegger will protect us.

Why is Schwarzenegger a sufficient defense against AI from the point of view of the masses? Because all the global dangers that both developers and officials talk about today ultimately boil down to a thought experiment: what happens if… That is, to a kind of science fiction. Like gray goo. That, too, was feared not so long ago. Somehow the fear dissipated.

Why did this particular conversation about the dangers of AI arise today?

So what happened? Why now, and with such force? Why didn’t anyone say anything like this until a couple of months ago? Actually, they did. And they did so often. It’s just that no one listened to this muttering. But on March 14, ChatGPT 4 came out. The progress between ChatGPT 3.5 and ChatGPT 4.0 is so great that many people gasped. About 100 million users noticed the jump. A hypothetical scenario emerged: if it continues to progress like this, we’ll lose control over it. And when we do, it will be too late. Too late for what? What will we “lose control” of? It doesn’t matter. Then came the letter from Musk and company. Then the other letters I mentioned. Then the bureaucrats came along.

Geoffrey Hinton said in an interview with the NYT: “There’s a possibility that what’s going on in these systems far exceeds the complexity of processes in the human brain. Look at what was happening five years ago and what’s happening now. Imagine the speed of change in the future. It’s frightening.”

There is a second reason for fear here, besides speed. That we don’t understand how it works today is one thing, but chances are we never will, because a simpler system (the biological brain) simply cannot make sense of a more complex one (like superintelligence).

Scenarios, new and old, of the end of the world brought about by AI have begun to gain traction. How plausible are they? Maybe that’s worth discussing. So far, Bill Gates’ view is closest to mine. He wrote simply: the graphical interface came along a long time ago, and it completely changed the way computers and people interact. Now there are large language models: GPT, for example. They’re going to change computer-human interaction, too. That’s great and cool. What end of the world? Or, conversely, what philosopher’s stones? What are you talking about?

Could it be that Gates is missing something? Does he underestimate the global nature of the change? He sees everything. It’s just that he has been directly involved in so many computer revolutions that he sees not only the way into the new wave, but also the way out. And I trust his vast experience. Because I myself don’t see any catastrophic scenarios, meaning serious ones, not ones meant merely to stir up the public.

But the world is unpredictable, and we are constantly reminded of that. However, GPT 4.5 is promised in September, and GPT 5.0 in January at the latest. A lot will become clearer.

Vladimir Gubailovskii 10.06.2023